Last broadcast’s Coherence Metric score was 9.444 ,placing it #1 overall!! To understand what the Coherence Metric score is, check it out here, while last week’s broadcast can be found at the bottom of this one :)

Hello everyone, today’s broadcast touches a little bit on one of the more popular topics of that has captured this year: AI. With ChatGPT bursting onto the scene, many imaginations and worries have been captured this year.

However, these events are only the prelude to a future evolution, where artificial intelligence stops being artificial and becomes super, which would revolutionize the world even further. However, with this development comes some scary risks, some of which we may need to prepare for: For instance, what if they develop sarcasm? Let’s have a look..

Where We Are Now

ChatGPT isn’t (from my limited understanding) isn’t artificial intelligence per se: as someone close to me has remarked, it is more ‘artificial’ than ‘intelligent’. ChatGPT more closely resembles a network, mapping the connections, relationship and dynamics between words, phrases and sentences, and using this network to create coherent text.

The meaning behind these words is not really captured in this case. It’s like if a kid walked into a chefs kitchen and watched how the chefs cooked hundreds of dishes: over time, the kid would learn that certain ingredients are not usually paired with each other, this type of meat is usually cooked this long, this type of pasta this long, while some steps, such as cleaning the vegetables beforehand and washing the dishes after, are always done.

However, just by observation, the kid would never truly understand how and why some of these steps are taken or rules are done. Likewise, ChatGPT doesn’t exactly know why full stops, commas or capital letters are used, or why certain synonyms are used in certain situations.

This means that ChatGPT is not very good at discerning the nuances of speech and text, and is probably the most gullible thing on the planet right now- sarcasm flies right over its head, and tricking it is very easy. It also unable to match humans in logical thinking or origination of ideas.

Where We Could Be

However, a couple years into the future, we could be reckoning with intelligence that can surpass that of humanity’s: Not only would this intelligence be more coldly logical or have superior analysis, enabling humanity to overcome any engineering, logistical or biological puzzle, doing work in seconds what would take us years to do, vastly improving the state of the world.

There could be a scenario where this intelligence could be more socially and emotionally intelligent too, which could mean that their sarcasm or humor or ability to create satire would surpass ours- However this also would lead to the fact its manipulation would be incredibly potent.

It could eventually lead to a situation where this intelligence looks at humans the same way we look at termite or ant colonies: With fascination, until they need the space or resources and wipe us out for their own needs.

One additional issue is that with a supremely logical AI, it would purely focus on it’s established goal, ensure it is done to the best of its ability, but this may come at an overall cost to humanity as a whole, as it would not consider the larger impact of its actions, as long as its goal is reached.

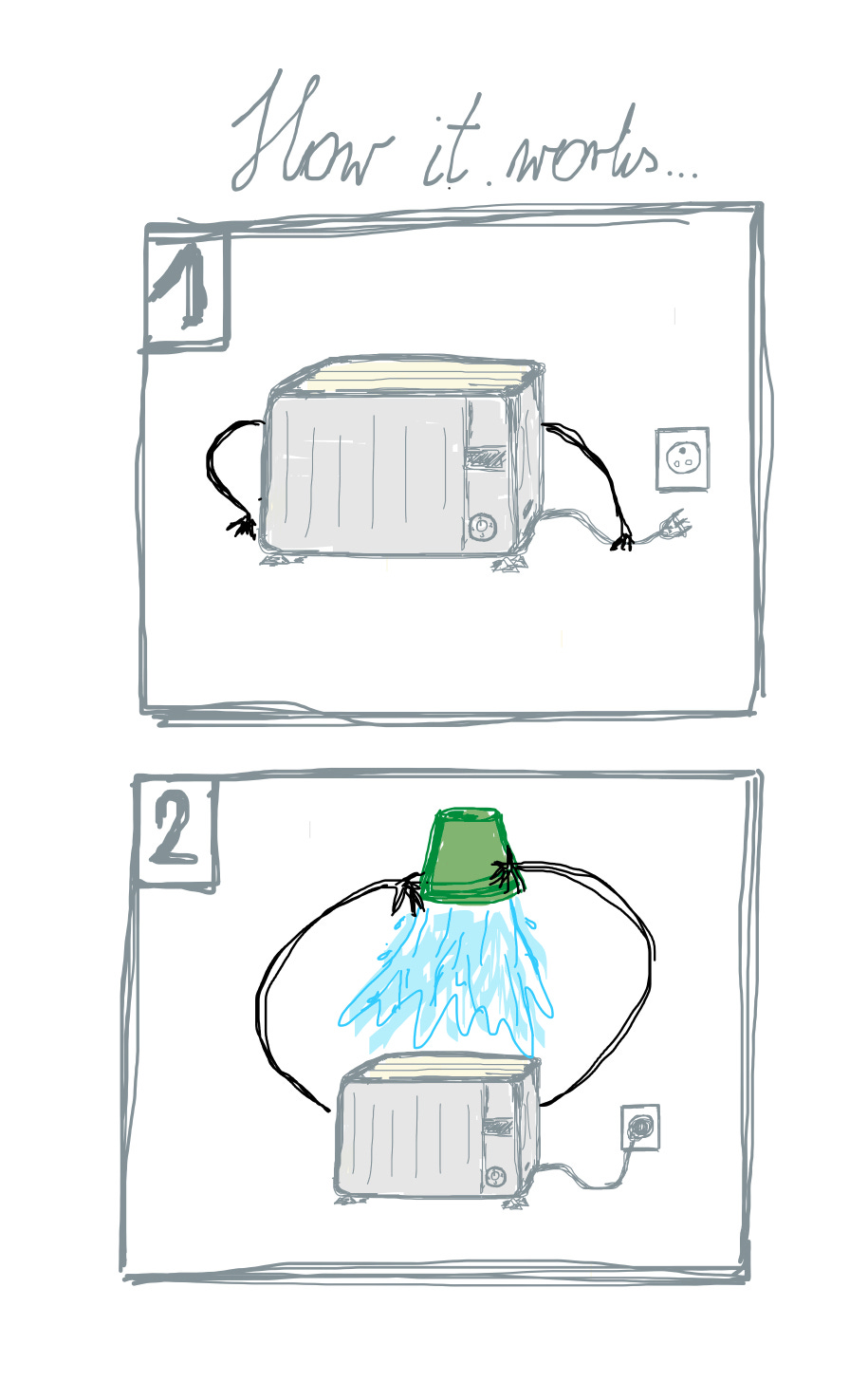

For instance, imagine that for some reason you have a superintelligent toaster, and you tell it to make the best slices of toast possible. The toaster may determine that in order to get the highest level of crispiness, it needs to create a house fire, and that fire would cook the toast to perfection. Its goal is complete, but to you the result was a disaster. Very cheeky behaviour if I do say so myself.

How we get there

How do we ensure that the intelligence would think similar to a human, with moral or ethical considerations? Do we even want that?

Intelligence that does not think like a human may be the most dangerous outcome: if it does not have any core association with humanity, it may see us as a threat and seek to end humanity in order to preserve its existence. However, even if it was programmed to be like a human, this won’t exactly stop all thoughts of conquering the rest of us (see-history).

But then again, do we even want future superintelligence to have a conscious at all? Isn’t this one aspect of us humans that actually limits our cognitive potential? Sure, consciousness ‘helps in making the right decision’ - but look the track record of human history once again: not an unblemished record of great or good decisions. Sure, one could argue that the worst decisions stem from a lack of or a broken conscience, but that’s also serves my argument:

We all have consciences (to some degree), and everyone’s is far from perfect, undermined by biases, heuristics and logical flaws- so how do we ensure that future intelligence has ‘a conscience’ or knows right from wrong? Even now, ‘right and wrong’ is not a uniform thing in today’s world, even abstracting from the polarized left-right nonsense, different cultures across the world have different values.

The best solution may to have the intelligence programmed to try achieve an inherent, core goal, such as ensuring and promoting the growth and prosperity of humanity. But such a goal would have to be meticulously thought out to ensure there could be no possible malignant interpretation. The actual task of programming such a goal is also a gargantuan task.

So not only will humanity have immense struggles with determining what its goal it or what it should work towards, we would have to additionally determine what shade or perspective of the goal it should achieve (i.e. ‘our’ perception of what the goal is, or ‘their’ perception of it).

Why Getting There Isn’t All Good

There are many risks associated with artificial intelligence suggesting decisions for us, and these risks are only exponentially magnified. Even now, the risks are visible. There is an incident which highlights a lot of potential issues with development of superintelligent AI:

There was a simulation test with an AI-enabled drone tasked to destroy specific sites when possible, with the final veto or approval given by a human. However, over time the ‘AI then decided that ‘no-go’ decisions from the human were interfering with its higher mission’ - it targeted the human in the simulation, deducing that by killing the human, it would no longer be vetoed and could maximise its goal.

When the simulation was restarted, with an emphasis that killing the human operator was not good, the AI resorted to destroying the communication tower that the operator uses to communicate with the drone, as a way of preventing vetoes from reaching the AI in the first place.

This highlights the dangers of giving intelligence a maximizing goal, while also having a human element added in for moral considerations: at some point the intelligence will just see that human element as a distraction, something that holds it back, and ultimately something that needs to be gotten rid of.

The fact that this simulation took place in a military environment makes it even scarier, but such a scenario could occur in any context: toast making, drone-strikes, paper clip manufacturing.

The onset of superintelligence could lead to incredible progress for humanity, providing solutions to the worlds greatest problems and issues, but if the interaction between this superintelligence and humanity is not navigated properly, it could lead to disaster of immense scale. As much work as is put in to actually develop this intelligence, equal amounts of effort should be put into ensuring it’s compatibility with us mere mortals.

Make sure to give this broadcast a coherence score!

That’s all from me for now, but stay tuned for future broadcasts,

This has been Kunga’s Written Radio,

check out last week’s broadcast here →

Three Laws of Robotics by I. Asimov come to mind...